
Somi's Devlog

Google Cloud Study Jam Kubernetes Lab4

Lab4 : Managing Deployments Using Kubernetes Engine

Goal

- Provide practice in scaling and managing containers so you can accomplish common scenarios where multiple heterogeneous deployments are being used.

What you'll do

- Practice with kubectl tool

- Create deployment yaml files

- Launch, update, and scale deployments

- Practice with updating deployments and deployment styles

Heterogeneous deployments include those that span regions within a single cloud environment, multiple public cloud environments (multi-cloud), or a combination of on-premises and public cloud environments (hybrid or public-private).

Heterogeneous deployments can help address the challenges that arise when a deployment is limited to a single environment or region, but they must be architected using programmatic and deterministic processes and procedures. One-off or ad-hoc deployment procedures can make deployments brittle and intolerant of failures, and ad-hoc processes can lose data or drop traffic. Good deployment processes must be repeatable and use proven approaches for managing provisioning, configuration, and maintenance.

Get the sample code

gsutil -m cp -r gs://spls/gsp053/orchestrate-with-kubernetes .

cd orchestrate-with-kubernetes/kubernetes

gcloud container clusters create bootcamp --num-nodes 5 --scopes "https://www.googleapis.com/auth/projecthosting,storage-rw"

Create a cluster with five n1-standard-1 nodes

Learn about the deployment object

Let's get started with Deployments. First let's take a look at the Deployment object. The explain command in kubectl can tell us about the Deployment object.

kubectl explain deployment

kubectl explain deployment --recursive

The --recursive option shows all of the fields.

kubectl explain deployment.metadata.name

You can use the explain command as you go through the lab to help you understand the structure of a Deployment object and understand what the individual fields do.

Create a deployment

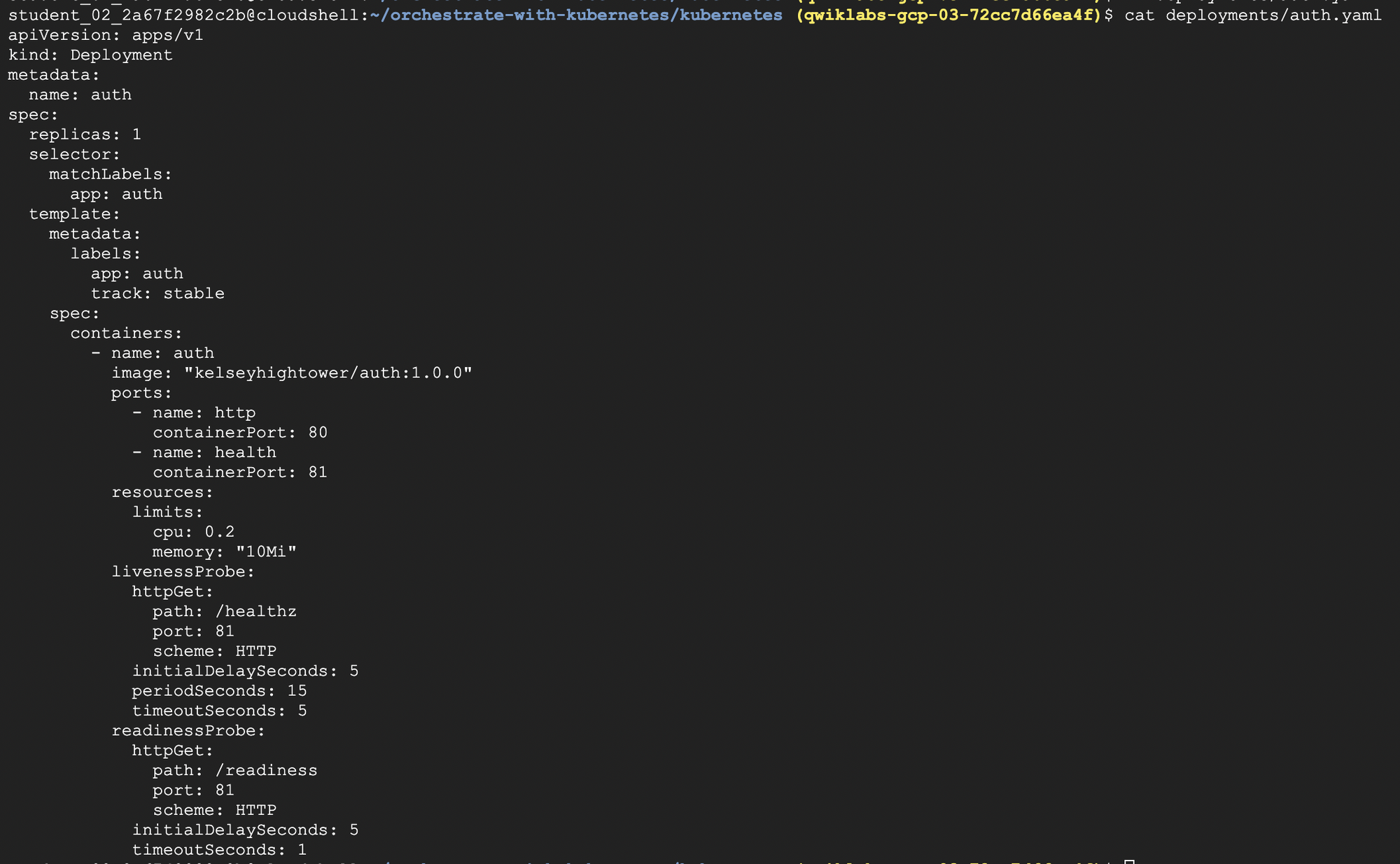

vi deployments/auth.yaml

Update the deployments/auth.yaml configuration file

Change the container image to version 1.0.0.

When you run the kubectl create command to create the auth deployment, it will make one pod that conforms to the data in the Deployment manifest. This means we can scale the number of Pods by changing the number specified in the replicas field.

kubectl create -f deployments/auth.yaml

Create your Deployment object using kubectl create.

kubectl get deployments

Once you have created the Deployment, you can verify that it was created.

kubectl get replicasets

Once the deployment is created, Kubernetes will create a ReplicaSet for the Deployment. We can verify that a ReplicaSet was created for our Deployment:

kubectl get pods

Finally, we can view the Pods that were created as part of our Deployment. Kubernetes creates the single Pod when the ReplicaSet is created.

kubectl create -f services/auth.yaml

kubectl create -f deployments/hello.yaml

kubectl create -f services/hello.yaml

Create a Service for the auth Deployment with the kubectl create command.

Now, do the same thing to create and expose the hello Deployment.

kubectl create secret generic tls-certs --from-file tls/

kubectl create configmap nginx-frontend-conf --from-file=nginx/frontend.conf

kubectl create -f deployments/frontend.yaml

kubectl create -f services/frontend.yaml

And one more time to create and expose the frontend Deployment.

You created a ConfigMap for the frontend.

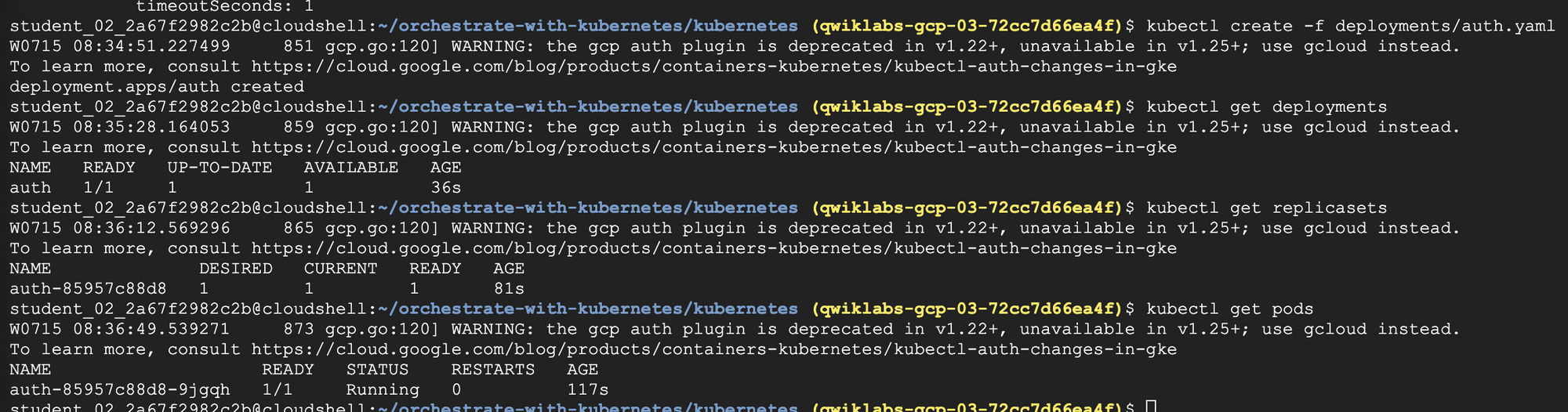

kubectl get services frontend

Interact with the frontend by grabbing its external IP and then curling to it.

curl -ks https://<EXTERNAL-IP>

And you get the hello response back.

curl -ks https://`kubectl get svc frontend -o=jsonpath="{.status.loadBalancer.ingress[0].ip}"`

You can also use the output templating feature of kubectl to use curl as a one-liner:

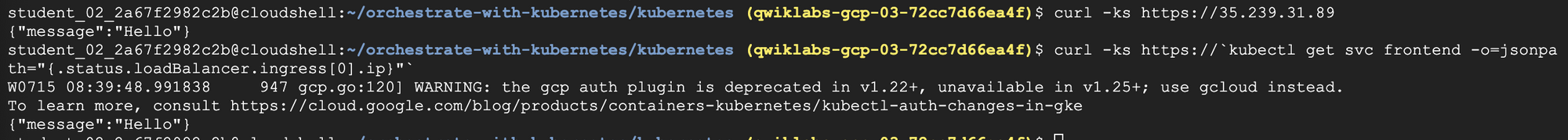
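As a rough illustration of what the jsonpath expression in the one-liner selects, the sketch below walks the same path through a parsed Service object. The JSON is a hypothetical, trimmed stand-in for `kubectl get svc frontend -o json`, and the IP comes from a documentation-only address range; `external_ip` and `frontend_url` are names made up for this example.

```python
import json

# Hypothetical, trimmed stand-in for `kubectl get svc frontend -o json`.
# 203.0.113.10 is a documentation-range address, not a real external IP.
SAMPLE_SERVICE_JSON = """
{
  "metadata": {"name": "frontend"},
  "status": {"loadBalancer": {"ingress": [{"ip": "203.0.113.10"}]}}
}
"""


def external_ip(service: dict) -> str:
    """Follow the same path as {.status.loadBalancer.ingress[0].ip}."""
    return service["status"]["loadBalancer"]["ingress"][0]["ip"]


def frontend_url(service: dict, path: str = "") -> str:
    """Build the URL that the curl one-liner would request."""
    return f"https://{external_ip(service)}{path}"


service = json.loads(SAMPLE_SERVICE_JSON)
print(frontend_url(service))
print(frontend_url(service, "/version"))
```

The backtick substitution in the shell command does the same thing inline: kubectl prints only the IP, and the shell splices it into the URL passed to curl.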

Scale a Deployment

kubectl explain deployment.spec.replicas

Now that we have a Deployment created, we can scale it. Do this by updating the spec.replicas field. You can look at an explanation of this field using the kubectl explain command again.

The replicas field can be most easily updated using the kubectl scale command:

kubectl scale deployment hello --replicas=5

After the Deployment is updated, Kubernetes will automatically update the associated ReplicaSet and start new Pods to make the total number of Pods equal 5.
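The idea of "make the running Pod count equal the desired count" can be sketched as a tiny reconcile step. This is a toy model, not the controller's actual code; `reconcile` is a hypothetical helper, and the starting count of 3 for hello is an assumption based on the later scale-back to 3.

```python
def reconcile(current_pods: int, desired_replicas: int) -> dict:
    """Return how many Pods would need to be started or stopped
    to move from the current count to the desired count."""
    if current_pods < 0 or desired_replicas < 0:
        raise ValueError("Pod counts cannot be negative")
    diff = desired_replicas - current_pods
    return {"start": max(diff, 0), "stop": max(-diff, 0)}


# Scaling up: assumed 3 running Pods, spec.replicas set to 5.
print(reconcile(3, 5))
# Scaling back down: 5 running Pods, spec.replicas set to 3.
print(reconcile(5, 3))
```

The point of the sketch is that you only declare the target in spec.replicas; the difference is worked out for you.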

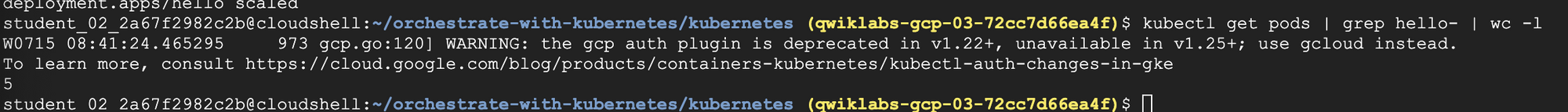

kubectl get pods | grep hello- | wc -l

Now scale back the application:

kubectl scale deployment hello --replicas=3

Rolling update

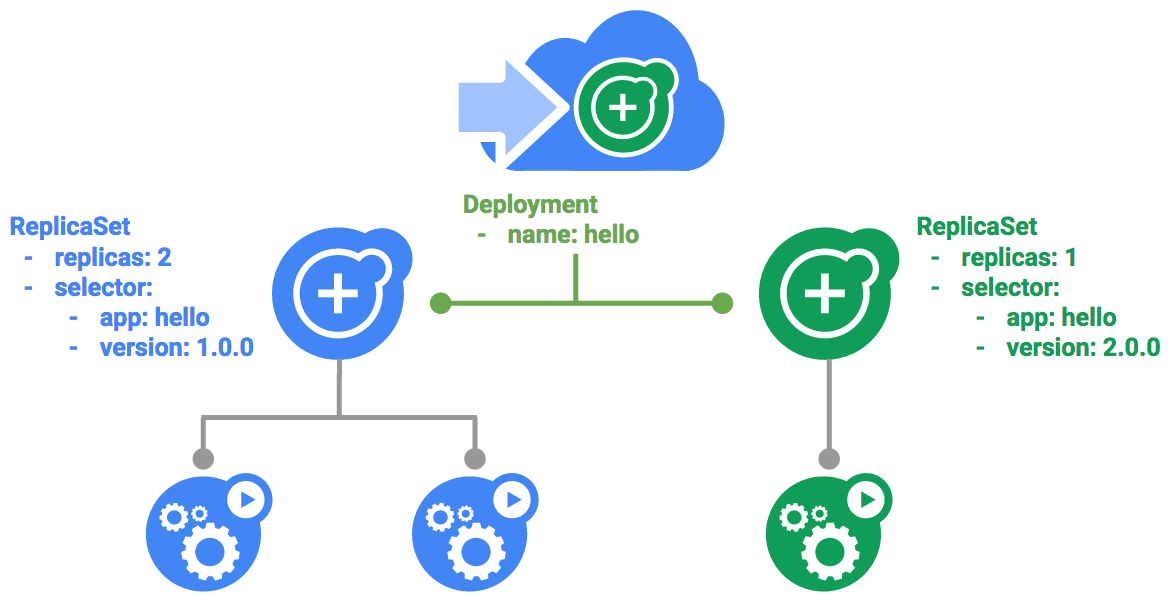

Deployments support updating images to a new version through a rolling update mechanism. When a Deployment is updated with a new version, it creates a new ReplicaSet and slowly increases the number of replicas in the new ReplicaSet as it decreases the replicas in the old ReplicaSet.
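A simplified way to picture the two ReplicaSets trading places is the simulation below. It assumes one extra Pod may be started before an old one is removed; the real controller is governed by the Deployment's maxSurge and maxUnavailable settings and waits for Pods to become ready, none of which is modeled here. `rolling_update_steps` is a hypothetical helper.

```python
def rolling_update_steps(replicas: int) -> list:
    """Return the (old, new) replica counts after each step of a toy
    rolling update that surges by one Pod at a time."""
    old, new = replicas, 0
    steps = [(old, new)]
    while old > 0:
        new += 1  # start one Pod in the new ReplicaSet
        steps.append((old, new))
        old -= 1  # then remove one Pod from the old ReplicaSet
        steps.append((old, new))
    return steps


for old, new in rolling_update_steps(3):
    print(f"old ReplicaSet: {old}  new ReplicaSet: {new}  total: {old + new}")
```

In this model the total never drops below the desired count, which is why a rolling update can proceed without taking the application offline.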

After you edit the Deployment's image version, the updated Deployment is saved to your cluster and Kubernetes begins a rolling update.

kubectl rollout history deployment/hello

You can also see a new entry in the rollout history

Pause a rolling update

If you detect problems with a running rollout, pause it to stop the update.

kubectl rollout pause deployment/hello

kubectl rollout status deployment/hello

Verify the current state of the rollout

You can also verify this on the Pods directly:

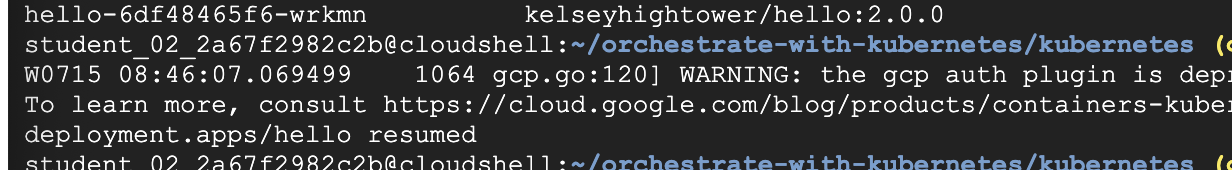

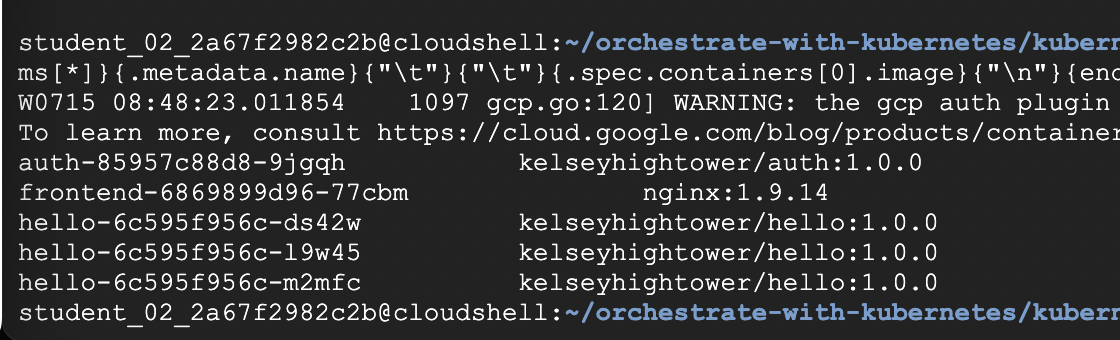

kubectl get pods -o jsonpath --template='{range .items[*]}{.metadata.name}{"\t"}{"\t"}{.spec.containers[0].image}{"\n"}{end}'
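To make the range template easier to read, here is a rough Python equivalent applied to a hypothetical, trimmed Pod list. The Pod names and image names are invented for illustration; only the traversal (name, two tabs, first container image, newline) mirrors the template.

```python
# Hypothetical, trimmed stand-in for `kubectl get pods -o json`.
# Pod names and image names are invented for this example.
SAMPLE_PODS = {
    "items": [
        {
            "metadata": {"name": "hello-aaaa"},
            "spec": {"containers": [{"image": "example/hello:1.0.0"}]},
        },
        {
            "metadata": {"name": "hello-bbbb"},
            "spec": {"containers": [{"image": "example/hello:2.0.0"}]},
        },
    ]
}


def pod_images(pod_list: dict) -> str:
    """Mimic {range .items[*]}{name}{tab}{tab}{image}{newline}{end}."""
    return "".join(
        f'{item["metadata"]["name"]}\t\t{item["spec"]["containers"][0]["image"]}\n'
        for item in pod_list["items"]
    )


print(pod_images(SAMPLE_PODS), end="")
```

During a paused rollout, a listing like this is what shows some Pods on the old image and some on the new one.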

Resume a rolling update

The rollout is paused which means that some pods are at the new version and some pods are at the older version. We can continue the rollout using the resume command.

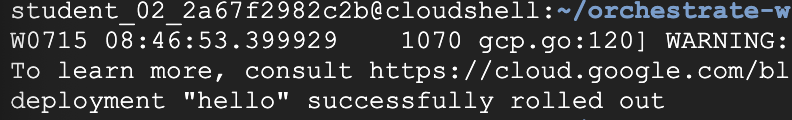

kubectl rollout resume deployment/hello

kubectl rollout status deployment/hello

Rollback an update

Assume that a bug was detected in your new version. Since the new version is presumed to have problems, any users connected to the new Pods will experience those issues.

You will want to roll back to the previous version so you can investigate and then release a version that is fixed properly.

Use the rollout command to roll back to the previous version:

kubectl rollout undo deployment/hello

Verify the roll back in the history:

kubectl rollout history deployment/hello

kubectl get pods -o jsonpath --template='{range .items[*]}{.metadata.name}{"\t"}{"\t"}{.spec.containers[0].image}{"\n"}{end}'
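When reading the history after an undo, it may help to think of the rollback as re-applying the previous revision under a new revision number. The sketch below is a toy model of that bookkeeping under that assumption; `rollout_undo` and the image names are hypothetical, and it does not talk to a cluster.

```python
def rollout_undo(history: list) -> list:
    """history is a list of (revision, image) tuples, oldest first.
    Return the history after rolling back to the previous revision."""
    if len(history) < 2:
        raise ValueError("no previous revision to roll back to")
    *older, previous, current = history
    next_revision = current[0] + 1
    # The previous revision's image is re-applied as the newest revision.
    return older + [current, (next_revision, previous[1])]


# Hypothetical history: revision 1 ran 1.0.0, revision 2 ran 2.0.0.
history = [(1, "hello:1.0.0"), (2, "hello:2.0.0")]
print(rollout_undo(history))
```

In this model the rolled-back state shows up as the highest revision, so the latest entry in the history carries the old image again.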

Canary deployments

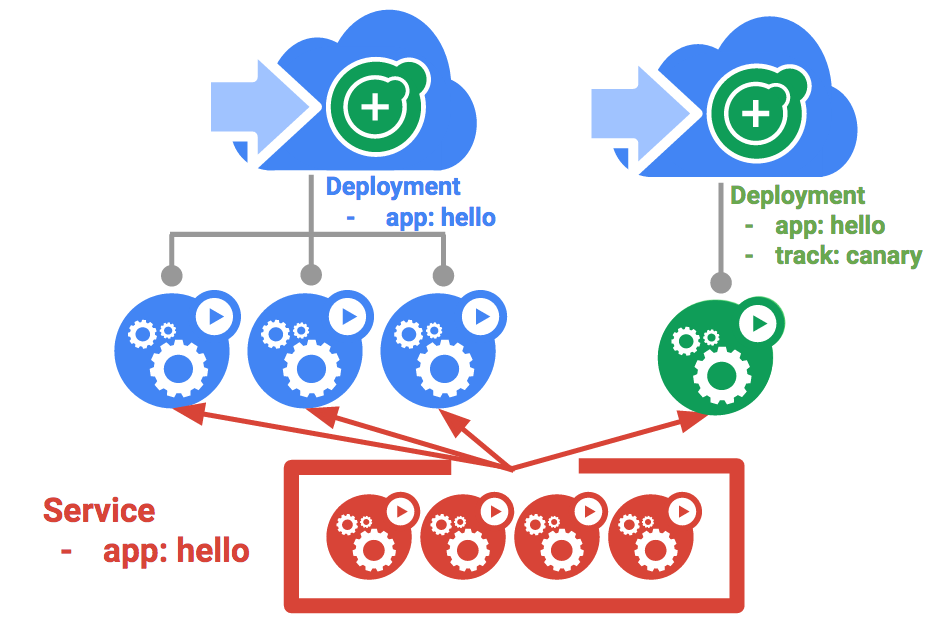

When you want to test a new deployment in production with a subset of your users, use a canary deployment. Canary deployments allow you to release a change to a small subset of your users to mitigate risk associated with new releases.

Create a canary deployment

A canary deployment consists of a separate deployment with your new version and a service that targets both your normal, stable deployment and your canary deployment.

Create the canary deployment:

kubectl create -f deployments/hello-canary.yaml

After the canary deployment is created, you should have two deployments, hello and hello-canary. Verify it with this kubectl command:

kubectl get deployments

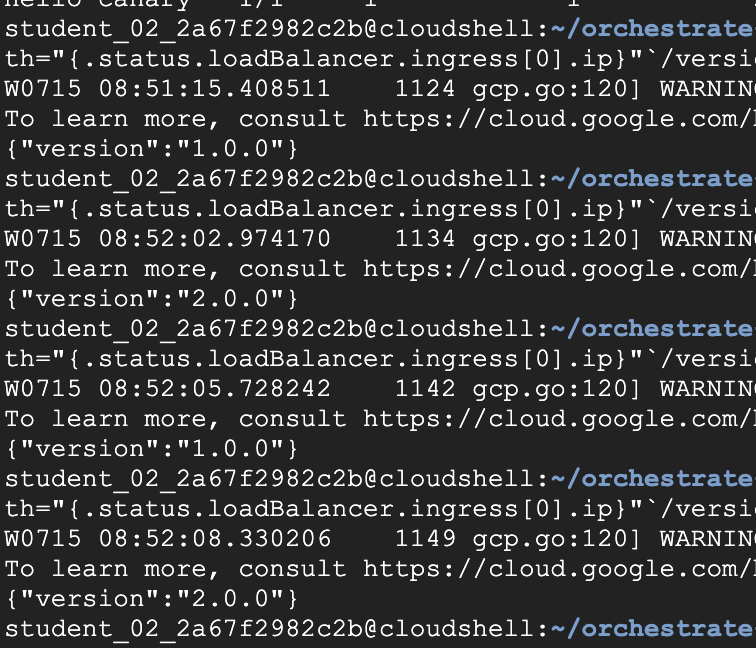

Verify the canary deployment

You can verify the hello version being served by the request:

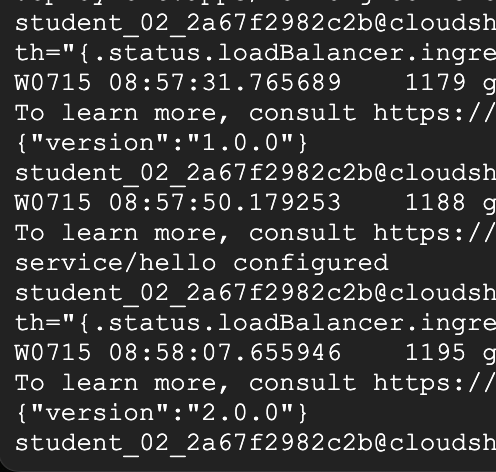

curl -ks https://`kubectl get svc frontend -o=jsonpath="{.status.loadBalancer.ingress[0].ip}"`/version

Run this several times and you should see that some of the requests are served by hello 1.0.0 and a small subset (1/4 = 25%) are served by 2.0.0.
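The 1/4 figure follows from the Pod counts. A minimal sketch of the arithmetic, assuming 3 stable hello Pods and 1 canary Pod and assuming the service spreads requests evenly across all matching Pods (`canary_share` is a hypothetical helper):

```python
from fractions import Fraction


def canary_share(stable_replicas: int, canary_replicas: int) -> Fraction:
    """Expected fraction of requests that reach the canary when traffic
    is spread evenly over every Pod behind the service."""
    total = stable_replicas + canary_replicas
    if total == 0:
        raise ValueError("no Pods behind the service")
    return Fraction(canary_replicas, total)


share = canary_share(stable_replicas=3, canary_replicas=1)
print(share, f"{float(share):.0%}")
```

Because the split is driven by replica counts, changing the size of either deployment changes the share of users who see the new version.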

Canary deployments in production - session affinity

In this lab, each request sent to the Nginx service had a chance to be served by the canary deployment. But what if you wanted to ensure that a user didn't get served by the Canary deployment? A use case could be that the UI for an application changed, and you don't want to confuse the user. In a case like this, you want the user to "stick" to one deployment or the other.

You can do this by creating a service with session affinity. This way the same user will always be served from the same version. In the example below the service is the same as before, but a new sessionAffinity field has been added, and set to ClientIP. All clients with the same IP address will have their requests sent to the same version of the hello application.

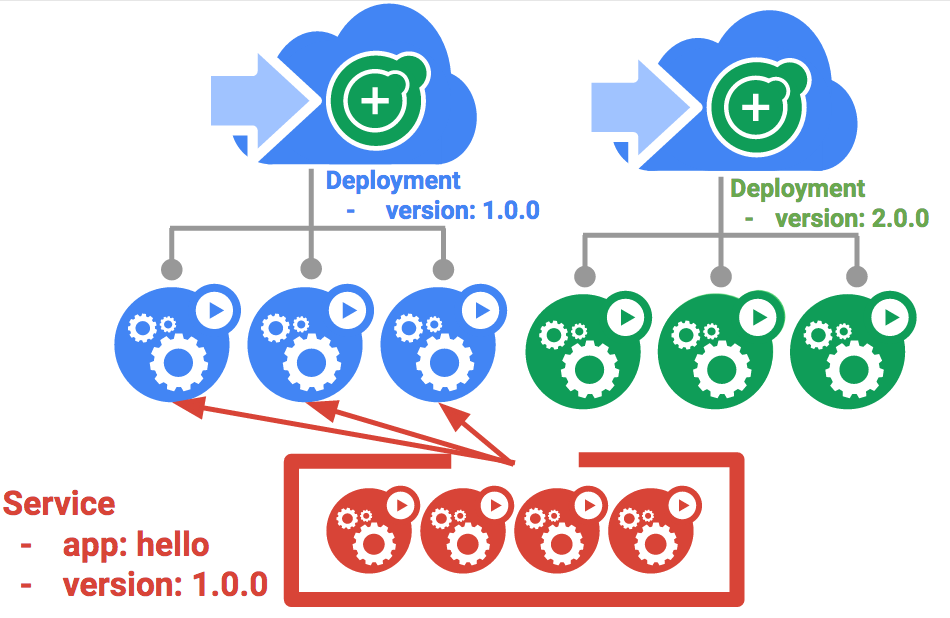
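The effect of ClientIP affinity, the same client always landing on the same backend, can be illustrated with a deterministic mapping from IP to backend. This is only a toy model: hashing is an assumption made for the sketch, not a description of how Kubernetes implements affinity, and `pick_backend` and the backend names are hypothetical.

```python
import hashlib


def pick_backend(client_ip: str, backends: list) -> str:
    """Map a client IP to one backend deterministically, so repeated
    requests from that IP always reach the same backend."""
    if not backends:
        raise ValueError("no backends available")
    digest = hashlib.sha256(client_ip.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(backends)
    return backends[index]


# Hypothetical backends: three stable Pods and one canary Pod.
backends = ["hello-1", "hello-2", "hello-3", "hello-canary-1"]
first = pick_backend("198.51.100.7", backends)
second = pick_backend("198.51.100.7", backends)
print(first, second, first == second)
```

Without affinity, each request could land on either version; with it, a given client keeps seeing one version for as long as the mapping holds.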

Blue-green deployments

Rolling updates are ideal because they allow you to deploy an application slowly with minimal overhead, minimal performance impact, and minimal downtime. There are instances where it is beneficial to modify the load balancers to point to that new version only after it has been fully deployed. In this case, blue-green deployments are the way to go.

Kubernetes achieves this by creating two separate deployments; one for the old "blue" version and one for the new "green" version. Use your existing hello deployment for the "blue" version. The deployments will be accessed via a Service which will act as the router. Once the new "green" version is up and running, you'll switch over to using that version by updating the Service.

The service

Use the existing hello service, but update it so that it has the selector app: hello, version: 1.0.0. The selector will match the existing "blue" deployment, but it will not match the "green" deployment because that one uses a different version label.

kubectl apply -f services/hello-blue.yaml
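Why the blue selector skips green Pods can be shown with a small matching function: an equality-based selector matches a Pod only when every key/value pair in the selector is present in the Pod's labels. The label sets below are assumptions for illustration (the green version 2.0.0 and the extra `track` label are not taken from the manifests), and `selector_matches` is a hypothetical helper.

```python
def selector_matches(selector: dict, labels: dict) -> bool:
    """True when every key/value pair in the selector appears in labels."""
    return all(labels.get(key) == value for key, value in selector.items())


blue_selector = {"app": "hello", "version": "1.0.0"}

# Assumed Pod labels; `track` is an extra label the selector ignores.
blue_pod = {"app": "hello", "version": "1.0.0", "track": "stable"}
green_pod = {"app": "hello", "version": "2.0.0", "track": "stable"}

print(selector_matches(blue_selector, blue_pod))   # blue Pods receive traffic
print(selector_matches(blue_selector, green_pod))  # green Pods do not
```

Switching the service to green is then just a matter of changing the version value in the selector, which is what applying a different service file accomplishes.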

Updating using Blue-Green Deployment

In order to support a blue-green deployment style, we will create a new "green" deployment for our new version. The green deployment updates the version label and the image path.

Create the green deployment:

kubectl create -f deployments/hello-green.yaml

Once you have a green deployment and it has started up properly, verify that the current version of 1.0.0 is still being used:

curl -ks https://`kubectl get svc frontend -o=jsonpath="{.status.loadBalancer.ingress[0].ip}"`/version

Now, update the service to point to the new version:

kubectl apply -f services/hello-green.yaml

When the service is updated, the "green" deployment will be used immediately. You can now verify that the new version is always being used.

curl -ks https://`kubectl get svc frontend -o=jsonpath="{.status.loadBalancer.ingress[0].ip}"`/version

Blue-Green Rollback

If necessary, you can roll back to the old version in the same way. While the "blue" deployment is still running, just update the service back to the old version.

kubectl apply -f services/hello-blue.yaml

Once you have updated the service, your rollback will have been successful. Again, verify that the right version is now being used:

curl -ks https://`kubectl get svc frontend -o=jsonpath="{.status.loadBalancer.ingress[0].ip}"`/version
